An Open Letter to Congress on Military AI, Democratic Oversight, and the Cost of Principle

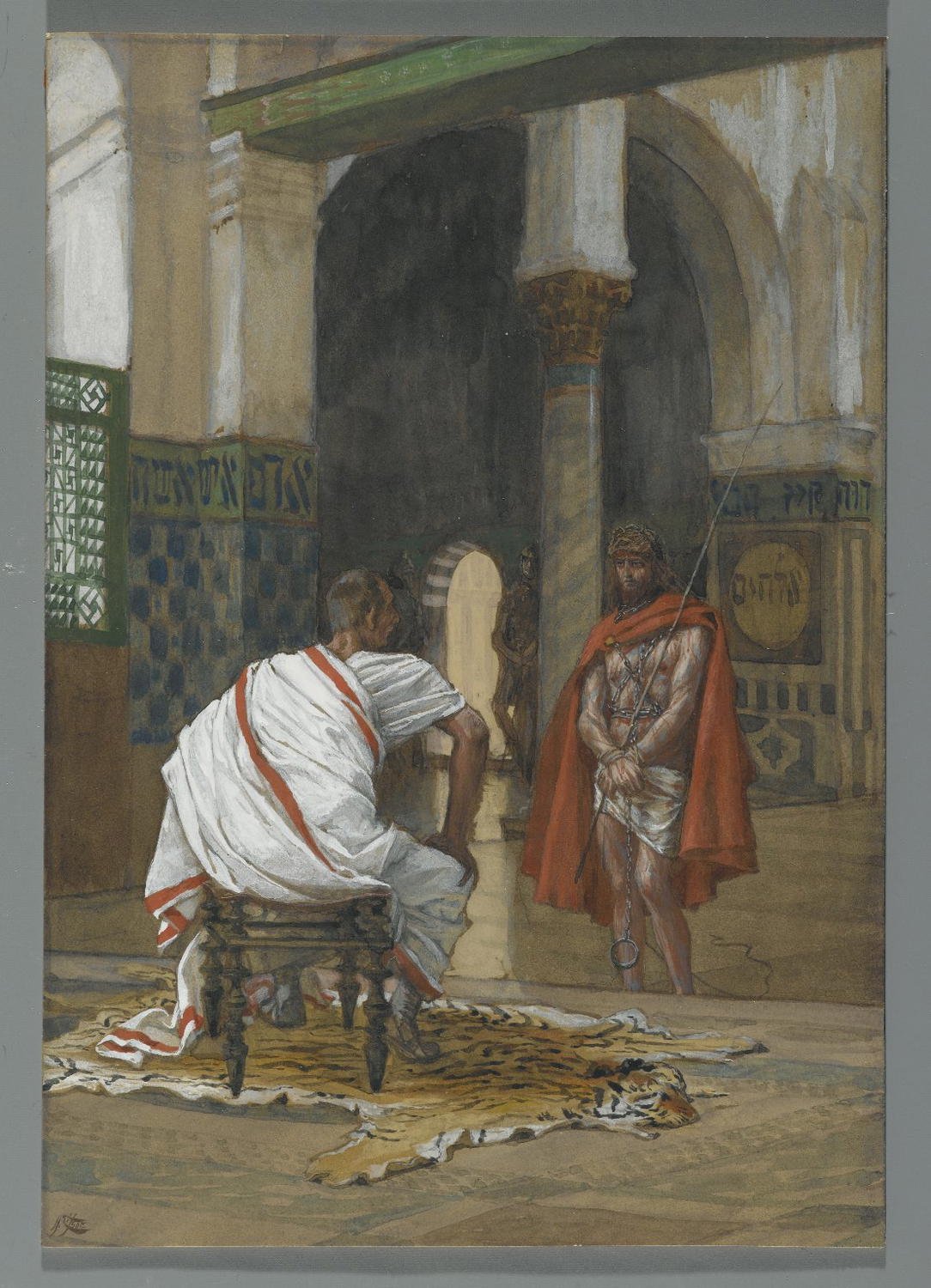

James Tissot (Nantes, France, 1836–1902, Chenecey-Buillon, France). Jesus Before Pilate, Second Interview (Jésus devant Pilate. Deuxième entretien), 1886–1894.

“Let every person be subject to the governing authorities. For there is no authority except from God, and those that exist have been instituted by God. 2 Therefore whoever resists the authorities resists what God has appointed, and those who resist will incur judgment. 3 For rulers are not a terror to good conduct, but to bad. Would you have no fear of the one who is in authority? Then do what is good, and you will receive his approval, 4 for he is God’s servant for your good. But if you do wrong, be afraid, for he does not bear the sword in vain. For he is the servant of God, an avenger who carries out God’s wrath on the wrongdoer. 5 Therefore one must be in subjection, not only to avoid God’s wrath but also for the sake of conscience. 6 For because of this you also pay taxes, for the authorities are ministers of God, attending to this very thing. 7 Pay to all what is owed to them: taxes to whom taxes are owed, revenue to whom revenue is owed, respect to whom respect is owed, honor to whom honor is owed.”

The Holy Bible: English Standard Version (Wheaton, IL: Crossway Bibles, 2025), Ro 13:1–7.

This letter is for Congress and for anyone who believes that the way a democracy builds its weapons is just as important as how effective those weapons are.

I used to work in Army logistics and now work with advanced AI systems. These two fields are converging in ways most Americans do not notice, and the people responsible for oversight seem mostly absent.

The Pentagon has struck a deal with OpenAI permitting “any lawful use” of frontier AI by the Department of War (DoW). Around the same time, Anthropic, another leading AI lab, sought binding contractual prohibitions on two things: mass domestic surveillance of U.S. persons, and fully autonomous weapons that can select and engage human targets without meaningful human oversight. When Anthropic refused to weaken those red lines, it was reportedly designated a “supply chain risk.”

A domestic company was penalized by the procurement apparatus of the United States government for insisting on stronger civil liberties protections than the government was willing to accept.

That is a signal about what kind of AI governance we are building.

The phrase “any lawful use” sounds like a constraint. It is not. It is a delegation.

It does not refer to clear, democratically debated statutes about how frontier AI may be used in war or surveillance. It points into a thicket of existing surveillance authorities, secret court interpretations, and internal DoW legal opinions that ordinary citizens and most members of Congress cannot read, contest, or even see. Decisions about whether AI systems can be used for mass domestic surveillance or for automated targeting and lethal force workflows are being made inside classified procurement processes and executive-branch legal memos, not in open hearings or public law.

This is a structural bypass of domestic governance: the decisions are being made without debate.

The laws of war, the Fourth Amendment, and the basic question of when and how the state may watch and kill in our name: these are not matters that should be settled by what a government lawyer can argue is technically permissible in a classified annex to a defense contract. They are matters for public deliberation and legislative action. That is what Congress is for.

When the government designates a company a “supply chain risk” for drawing ethical lines, it does not merely punish that one company. It broadcasts a lesson to every other firm in the industry: compliance pays; principle is a liability.

This is precisely backwards. A constitutional democracy should create incentives that reward companies insisting on higher standards, not lower ones. If the most capable AI labs learn that demanding real constraints will cost them federal contracts while acquiescence will be rewarded, we will not end up with a defense AI ecosystem that takes civil liberties and the laws of war seriously. We will end up with one that has been quietly trained not to.

The “supply chain risk” designation applied to Anthropic deserves congressional scrutiny on its own terms. But the larger issue is the incentive structure it reveals and reinforces. We should not build a sovereign AI stack on a foundation that punishes conscience.

Let’s try a simple substitution—leave the underlying mechanics of the squeeze alone, but swap out the name on the target's door. Picture a Catholic, Adventist, or Southern Baptist hospital system that relies heavily on Medicare and Medicaid funding to keep the lights on. During a routine contract renewal, federal regulators suddenly demand the network perform procedures fundamentally at odds with its faith. The network refuses. They don't break the law, and they don't file a flashy lawsuit. They just dig in their heels.

The retaliation isn't a high-profile prosecution. You won't see a courtroom battle or a fiery congressional hearing. Instead, some mid-level bureaucrat quietly slaps the network with a "supply chain risk" designation. The hospital doesn't get padlocked overnight. But imperceptibly, exemption requests get stuck in administrative limbo, grants mysteriously dry up, and auditors suddenly take a microscope to their long-standing accreditations. With little to no paper trail, any potential legal challenge gets relegated to a slow bleedout. The quiet lesson delivered to every other religious provider is unmistakable. Bending the knee keeps the money flowing; having a conscience will bankrupt you.

This is the shape of the tool that was already used against Anthropic. The target was an AI company. The mechanism does not care about the targets.

To be clear, I am not claiming this is the government’s present intention toward churches or religious institutions. I am saying that the tool exists and was used. The sovereign AI stack being assembled around this mechanism, however benignly, will make the tool dramatically more capable: faster, cheaper, quieter, and harder to contest than it is today. You do not need malicious intent for a powerful tool to cause serious harm. You only need the tool to exist and the constraints on its use to be weak.

This is why U.S. constitutional architecture should matter to everyone: not only to technologists or civil libertarians, but to every institution that has ever looked at a government demand and said, there are things we will not do.

A longer game is being played here, and it concerns me more than any single contract.

The United States is quietly assembling what might be called a sovereign AI stack: a fusion of state authority and corporate cloud and AI infrastructure that centralizes enormous power over data, perception, targeting, and decision-making in a layer that Congress neither designed nor clearly controls. When that stack is fed by pervasive surveillance, governed by the executive-corporate nexus, and tied to frontier AI systems with poorly bounded capabilities, it creates a path around the normal friction of democratic governance: around bicameral negotiation, public debate, judicial scrutiny, and the slower deliberative processes that exist precisely to prevent the concentration of unchecked power.

Policy can be implemented as code and contracts long before–or entirely instead of–statute. The more a society’s critical functions run through this stack, the easier it becomes to hollow out democratic institutions while continuing to speak their language.

What I am describing is a structural tendency that is already in motion, in procurement documents and legal interpretations that most elected officials have not read and most citizens do not know exist. A sovereign AI stack left unaddressed by law does not need to be malicious to erode democracy. It only needs to be faster than the legislative process, and it already is.

I am not asking you to ignore legitimate national security concerns, nor am I pretending our adversaries are standing still. I am, rather, asking you to insist that the United States pursue its security without sacrificing the democratic architecture that makes that security worth having.

Require senior DoW officials and executives from major AI labs to testify, in public, about the terms under which their systems may be used for surveillance, targeting, and autonomous operations. Make the standard “lawful use” clauses public to the greatest extent possible. Unaccountable power is itself a threat to national security.

At a minimum, Congress should establish clear statutory prohibitions–enforceable in court–on using frontier AI systems for mass, suspicionless domestic surveillance of U.S. persons, and on deploying fully autonomous lethal systems that can select and engage human targets without meaningful human control and accountability. These limits should not live in internal Pentagon policy or private contractual language. They should be the law.

Any deployment of frontier AI into intelligence or lethal-force workflows should trigger mandatory reporting and review by Congress, and where appropriate, independent oversight bodies. “Any lawful use” should not function as a blanket carve-out. Specific categories of use should be subject to affirmative legislative authorization.

Investigate the supply chain risk designation applied to Anthropic and ensure that federal procurement is not being used to retaliate against firms that insist on stronger civil liberties and safety safeguards. Create affirmative incentives–not just the absence of punishment–for defense contractors who embed higher-law standards into their systems.

Begin building a bipartisan framework that recognizes how a sovereign AI stack, left to develop without democratic constraint, can undermine bicameralism, federalism, and individual civic standing. Where AI infrastructure becomes part of the state’s nervous system, democratic controls must be stronger, not weaker.

I am a Christian. I do not expect the United States to legislate theology, and I am not asking it to.

But I do believe that our tools, including our most powerful ones, must ultimately answer to something above raw expedience: our Constitution, the laws of war, and the basic moral recognition that we must not normalize total surveillance or machine-delegated killing. These are not sectarian commitments.

Our current policy trajectory says: if it can be made to fit under “any lawful use,” as interpreted behind closed doors by executive branch lawyers, it is acceptable to build, buy, and deploy. That is not a standard. That is an abdication.

As a veteran, I know there is no way to participate in war without moral paradox. I have made peace with that, as much as anyone can. But the outer limits of what we permit ourselves to do–the lines we will not cross regardless of what we could technically justify–are supposed to be set by the people’s representatives, in public, through deliberation. That is not a naive ideal. It is the design of the republic.

You have the authority to change the trajectory we are on. I am asking you to use it: bring these contracts into the open, set real limits in law, and ensure that the AI systems being woven into our security apparatus serve democratic order rather than quietly supplanting it.

The machines we build will carry the values we embed in them.

David Michael Moore is a former U.S. Army Reserve Logistician and a technologist working with advanced AI systems.

This letter was edited using the generative AI models Gemini 3.0 and Claude Sonnet 4.6.